Pillar 6: Sustainability

(Design agentic systems that scale responsibly: measure footprint, reduce waste, and govern compute like a shared resource)

Sustainability is often treated as an externality in early-stage AI deployments. That approach does not hold once systems become agentic and usage scales. Agents are loops; loops scale; and scaled compute becomes a material operational and environmental factor. Sustainability therefore belongs in the architectural model—not as moral signalling, but as an engineering discipline.

The sustainability objective for agentic systems can be stated clearly:

Minimize environmental impact per unit of useful outcome, and make that impact visible, governable, and improvable over time.

This pillar is tightly coupled to cost optimization and performance efficiency because the same architectural levers typically drive all three: model choice, token volume, loop depth, tool orchestration, caching, and scheduling. Sustainability also intersects directly with context optimization and autonomy governance: if you allow runaway context and runaway autonomy, you allow runaway compute.

10.1 Sustainability Starts With Measurement: You Cannot Optimize What You Cannot See

The first requirement for sustainability is measurement at the right resolution. Without it, teams default to assumptions, public estimates, or coarse aggregate metrics that do not support engineering decisions.

Google Cloud’s published methodology for measuring the environmental impact of AI inference is a useful example of the direction the industry is moving: it proposes a full-stack approach to estimating energy, emissions, and water impact per prompt (not just “model energy”), and it reports a median Gemini Apps text prompt estimate of 0.24 Wh, 0.03 gCO₂e, and 0.26 mL of water using that methodology.

The significance is not the precise number (which will vary by system and over time); it is the implication: sustainability measurement must be anchored in real system telemetry and workload characteristics, not folklore. (Note: the author once generated the same amount of emissions in a 7 day period through his LLM usage as driving his car 110km)

At minimum, sustainable agentic systems should track:

-

token usage (input/output),

-

model routing choices,

-

tool call counts and durations,

-

loop depth (steps per task),

-

cache hit rates,

-

concurrency and scheduling patterns,

-

and—where possible—energy/emissions proxies per workload and per model

10.2 The Efficiency Thesis: Sustainability Is Mostly About Reducing Waste

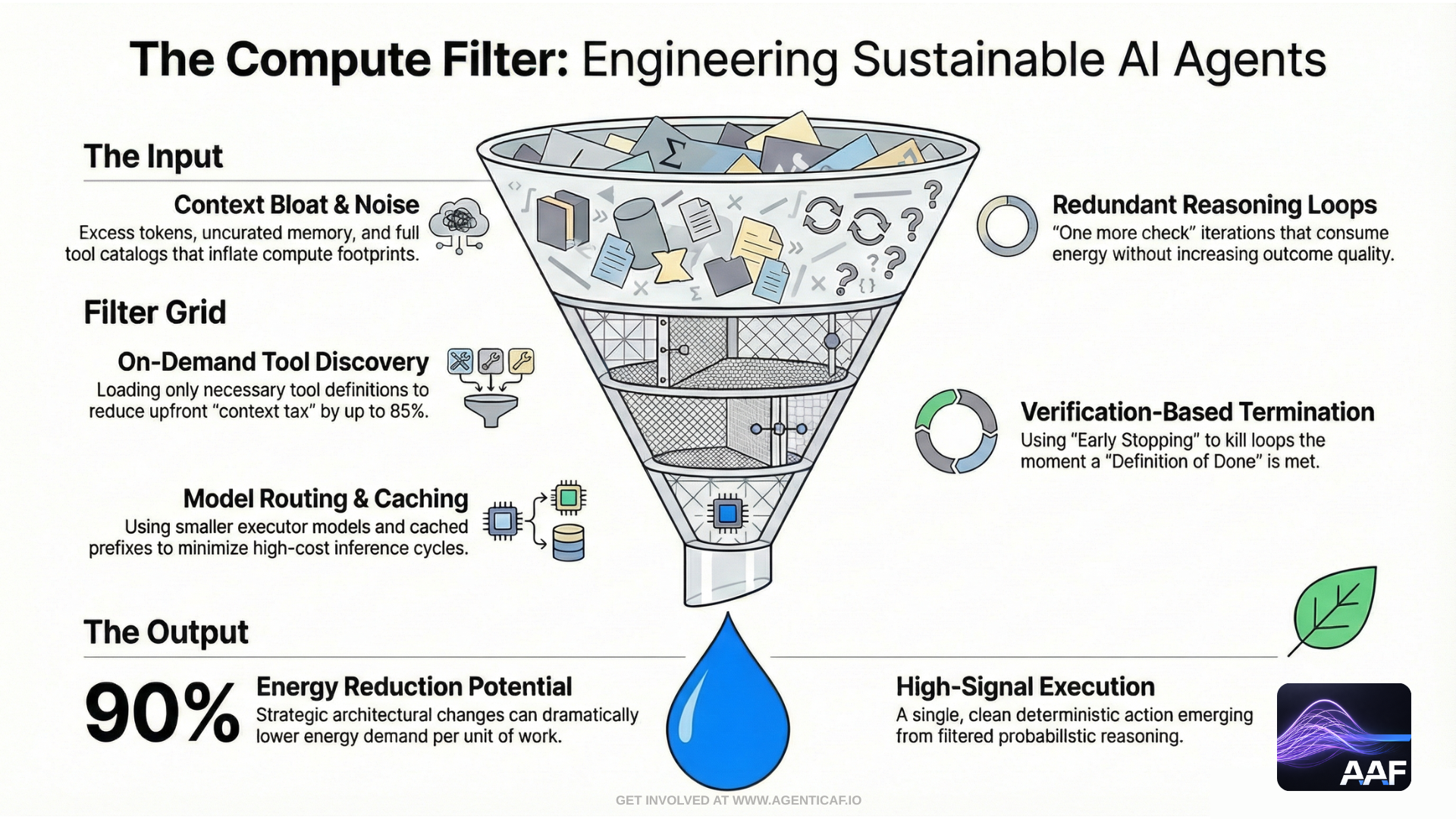

A useful framing for sustainability in agents is: reduce wasted computation.

When agents are inefficient, they waste compute in predictable ways:

-

over-long context windows,

-

repeated reasoning loops,

-

redundant tool calls,

-

multi-agent overhead without clear parallel benefit,

-

verbose outputs that are not used,

-

retry storms caused by poor failure handling.

External research supports the idea that relatively small design choices can have outsized effects. A UNESCO/UCL report argues that practical changes—such as using smaller models, reducing numerical precision in some contexts, and shortening outputs—could dramatically reduce energy consumption, with claims of reductions “up to 90%” under certain approaches.

This aligns with the design patterns already established in this document: model routing, output control, context budgeting, caching, and early stopping are sustainability controls as much as they are cost controls.

The architectural principle is:

Do not optimize sustainability as an afterthought. Engineer efficiency into the control loop.

10.3 Sustainable Agent Design: The Primary Levers

10.3.1 Right model, right phase (planner vs executor)

Sustainability improves when compute is matched to task. Using highly capable models for planning and smaller models for execution reduces unnecessary high-cost inference cycles—especially when execution involves deterministic steps.

This is the same “phase separation” logic from the cost pillar, interpreted as a sustainability pattern: fewer high-capability inference calls per unit of useful outcome.

10.3.2 Token discipline and context optimization

Context bloat is a sustainability problem because tokens are compute. In agent systems, the context window includes:

-

system prompts,

-

retrieved documents,

-

tool definitions,

-

tool outputs,

-

memory.

Reducing context volume without losing relevance—via retrieval strategies, summarization, tool discovery, and careful working sets—is one of the highest-leverage sustainability controls.

10.3.3 Early stopping and verification-based termination

A sustainable agent stops as soon as the outcome is verified. Long tail loops (“one more check,” “one more attempt”) are often low-value compute. Verification gates provide a disciplined stopping rule: terminate when success criteria are met and validated.

10.3.4 Caching and reuse (reduce repeated compute)

Prompt caching, plan reuse, and memoization reduce redundant inference. This is both economically and environmentally beneficial, because it reduces inference volume without reducing delivered capability.

10.3.5 Scheduling and workload shaping

Moving non-urgent tasks off-peak and batching work reduces peak demand and can reduce the need for over-provisioned infrastructure. For agent systems, the natural candidates include:

-

research runs,

-

indexing,

-

memory compaction,

-

evaluation harness runs,

-

reconciliation tasks.

Sustainability benefits from aligning heavy workloads to times and places where energy supply is cleaner and capacity is available, where possible.

10.4 Sustainability Must Account for Macro Constraints: Data Centre Growth Is Real

Sustainability engineering in agentic systems is not occurring in a vacuum. Data centre electricity demand is projected to grow significantly in the coming years, with AI as a major driver.

The International Energy Agency (IEA) reports projections in which global data centre electricity consumption is expected to double to around 945 TWh by 2030 in a base case, representing just under 3% of global electricity use, with growth around 15% per year from 2024–2030.

The IEA also discusses corresponding growth in electricity supply required for data centres—projecting global electricity generation to meet data centre demand rising from 460 TWh in 2024 to over 1,000 TWh in 2030 in its base case.

These macro projections reinforce the core point: as agentic systems scale, sustainability becomes a material constraint and a legitimate engineering objective. Organizations that treat it as optional will face increasing scrutiny from customers, regulators, and their own infrastructure realities.

10.5 Sustainability Governance: Make It a Control Surface

Sustainability becomes actionable when it is governed like other system constraints.

A sustainable agentic architecture should include:

1) Workload classification by environmental intensity

-

light inference tasks (summarization, extraction),

-

heavy reasoning tasks (planning, synthesis),

-

tool-heavy tasks (web research, large retrieval),

-

multi-agent tasks (coordination overhead).

2) Sustainability budgets

-

per team, per workflow, per agent,

-

aligned with cost budgets and autonomy budgets.

3) Defaults that minimize waste

-

concise outputs by default,

-

minimal context by default,

-

escalation to expensive reasoning only when needed,

-

throttling and queueing that reduce retry storms.

This ties directly to the “architecture of epistemic gates”: sustainability gates are a parallel class of gates that prevent low-value computation from quietly becoming the default mode of operation.

10.6 A Practical Sustainability Standard for Agentic Systems

A usable target statement for this pillar is:

We can explain the compute footprint of an agent task, we can measure it, we can bound it, and we can improve it over time—without compromising outcome quality.

This is the sustainability counterpart to reliability’s “repeatable outcomes” and security’s “constrain agency.” It fits naturally into the same operational discipline.

Section 10 Citations (Sources & Links)

-

Google Cloud-Measuring the environmental impact of AI inference (methodology; per-prompt estimates including energy, carbon, and water).

-

UNESCO — AI Large Language Models: new report shows small changes can reduce energy use by 90% (summary of UNESCO/UCL work; claims on energy reduction potential).

-

UCL — Practical changes could reduce AI energy demand by up to 90% (supporting summary of findings and example measures).

https://www.ucl.ac.uk/news/2025/jul/practical-changes-could-reduce-ai-energy-demand-90 -

UNESCO/UCL report (primary publication landing page).

https://unesdoc.unesco.org/ark%3A/48223/pf0000394521 -

International Energy Agency — Energy and AI: Energy demand from AI (data centre electricity demand projections incl. ~945 TWh by 2030 base case).

https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai -

International Energy Agency — Energy and AI: Energy supply for AI (projected electricity generation growth to supply data centres).

https://www.iea.org/reports/energy-and-ai/energy-supply-for-ai